Edge AI Overview

01/11/2021, hardwarebee

Ten years ago, few would have predicted where Artificial Intelligence is today. It is becoming a vital component of many industries and even of consumer products and gadgets. The moment has arrived to take the next step, and edge AI is the new frontier.

Edge devices are hardware components that govern the flow of data at the boundary between two networks and act as points of entry (or exit) for the network. Edge equipment is used for transmitting, routing, processing, monitoring, filtering, translating, and storing information that passes between networks and between service providers within companies. Examples of edge devices include routers, routing switches, built-in devices, multiplexers, IoT devices, and a broad range of network access devices.

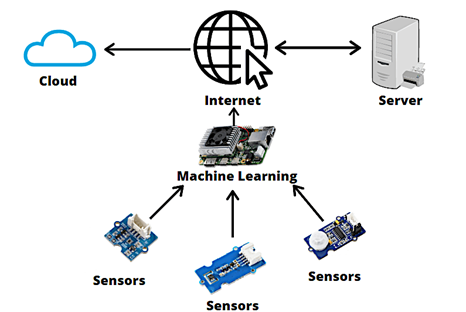

Running Artificial Intelligence algorithms at the edge, that is, on users' devices, is known as edge AI. The concept is derived from Edge Computing, which is based on the same premise: data is stored, processed, and managed directly at IoT endpoints. As a consequence, the device does not need to be connected to the cloud, because the data is processed for Machine Learning purposes directly on the device. Such devices can also run Deep Learning models and sophisticated algorithms on their own.

How does Edge AI work?

Edge AI means processing artificial intelligence algorithms on the device itself. The notion comes from Edge Computing: data is stored, processed, and managed directly on Internet of Things (IoT) devices.

Therefore, the device does not have to be connected to the cloud, since data is processed immediately where it is collected. Sophisticated Deep Learning models and other algorithms can also run independently on the device.

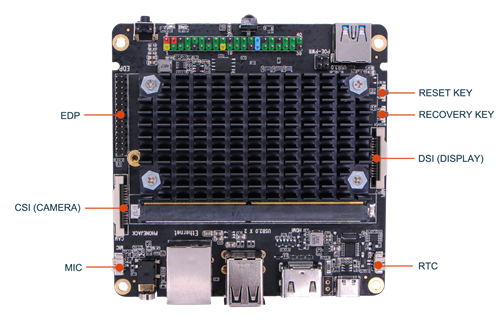

For deep learning models, AI processing is typically performed in a cloud-based data center because it requires significant processing capacity. Edge AI, however, moves part of the AI workload onto an edge device and keeps the data confined to that device (Figure 1):

Figure 1: The Rock Pi N10 is the latest addition to the Rock Pi series, designed for artificial intelligence and deep learning workloads

Edge AI Architecture

Solutions typically comprise three stages: building the analytical model, using the model, and deploying the model. Within each of these stages, decisions need to be made on data collection, data preparation, selection of the algorithm, ongoing training of the algorithm, model deployment and redeployment, and other tasks. Processing and storage capacity at the edge is an essential consideration as well. Some approaches use decentralized, peer-to-peer deployment models; each approach has advantages and disadvantages. For an image model, a typical workflow is:

- Build your own CNN or begin with a pre-trained CNN.

- Gather training and test data (images and labels).

- Train the network from scratch, or retrain a pre-trained one (i.e. transfer learning).

- Tune hyperparameters such as learning rate and batch size to reach the required accuracy.
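
The steps above can be sketched end to end. The example below is a minimal illustration in plain Python, not a real CNN: a fixed, hypothetical `frozen_features` function stands in for the feature extractor of a pre-trained network, a small logistic-regression head is trained on top of it (the transfer-learning step), and a small grid over learning rate and batch size picks the best configuration. The synthetic data set, all function names, and the hyperparameter values are assumptions made purely for illustration.

```python
import math
import random

def frozen_features(pixel_values):
    """Stand-in for a pre-trained CNN backbone (hypothetical): maps raw
    inputs to a small feature vector and is never updated during training."""
    mean = sum(pixel_values) / len(pixel_values)
    spread = max(pixel_values) - min(pixel_values)
    return [mean, spread]

def make_dataset(n, rng):
    """Synthetic 'images': class 1 is brighter on average than class 0."""
    data = []
    for _ in range(n):
        label = rng.randint(0, 1)
        base = 0.7 if label else 0.3
        pixels = [min(1.0, max(0.0, rng.gauss(base, 0.1))) for _ in range(16)]
        data.append((pixels, label))
    return data

def predict(weights, bias, features):
    """Logistic-regression head: probability that the input is class 1."""
    z = sum(w * x for w, x in zip(weights, features)) + bias
    return 1.0 / (1.0 + math.exp(-z))

def train_head(train, learning_rate, batch_size, epochs, rng):
    """Train only the classification head with mini-batch gradient descent."""
    weights, bias = [0.0, 0.0], 0.0
    samples = [(frozen_features(p), y) for p, y in train]
    for _ in range(epochs):
        rng.shuffle(samples)
        for start in range(0, len(samples), batch_size):
            batch = samples[start:start + batch_size]
            grad_w, grad_b = [0.0, 0.0], 0.0
            for feats, y in batch:
                err = predict(weights, bias, feats) - y
                grad_w = [g + err * x for g, x in zip(grad_w, feats)]
                grad_b += err
            weights = [w - learning_rate * g / len(batch)
                       for w, g in zip(weights, grad_w)]
            bias -= learning_rate * grad_b / len(batch)
    return weights, bias

def accuracy(weights, bias, data):
    hits = sum((predict(weights, bias, frozen_features(p)) >= 0.5) == bool(y)
               for p, y in data)
    return hits / len(data)

def tune(train, test, seed=0):
    """Grid search over learning rate and batch size.
    In practice, tune on a separate validation split; the test set is
    reused here only to keep the sketch short."""
    best = None
    for lr in (0.1, 1.0):
        for bs in (8, 32):
            w, b = train_head(train, lr, bs, epochs=50, rng=random.Random(seed))
            acc = accuracy(w, b, test)
            if best is None or acc > best[0]:
                best = (acc, lr, bs)
    return best

rng = random.Random(42)
train_set, test_set = make_dataset(200, rng), make_dataset(100, rng)
best_accuracy, best_lr, best_batch = tune(train_set, test_set)
print(f"best accuracy={best_accuracy:.2f} lr={best_lr} batch_size={best_batch}")
```

With a real framework, the frozen backbone would be an actual pre-trained network and the head would be its final layers, but the division of labor is the same: reuse the features, train the small part, then search the hyperparameters.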

Benefits of Edge AI Devices

There are numerous hardware options for edge AI processing, including CPUs, GPUs, ASICs, FPGAs, and SoC accelerators, depending on the AI application and device class. Many Artificial Intelligence use cases are best served by edge devices, which offer high availability, data security, reduced latency, and cost optimization.

Latency

The most obvious benefit of processing information at the edge is that data transmission to and from the cloud is no longer required. As a consequence, data processing latencies can be substantially decreased.

Consider a machine learning algorithm that monitors industrial equipment for faults. An Edge AI-enabled device can respond almost instantly when it detects a problem, for example by shutting down the compromised machine. If the same algorithm ran in the cloud instead, we could lose a second or more to data transfer to and from the cloud. While that may not seem like much, when it comes to mission-critical equipment, every margin of safety that can be obtained is typically worth pursuing.
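
The trade-off can be put in rough numbers. The sketch below simply adds up the response time for a cloud path and an edge path. The function name and the timing figures (a one-second cloud round trip as in the example above, 10 ms of inference on cloud hardware, 50 ms on a slower edge device) are illustrative assumptions, not measurements.

```python
def response_time_s(inference_s, network_round_trip_s=0.0):
    """Total time from sensor reading to decision, in seconds."""
    return inference_s + network_round_trip_s

# Illustrative figures only (assumptions, not measurements).
cloud = response_time_s(inference_s=0.010, network_round_trip_s=1.0)
edge = response_time_s(inference_s=0.050)

print(f"cloud: {cloud:.3f} s, edge: {edge:.3f} s, saved: {cloud - edge:.3f} s")
```

Even when the edge device is several times slower at inference than a data center, removing the network round trip dominates the total under these assumptions.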

Reduced Bandwidth Requirement and Cost

With less data flowing to and from edge IoT devices, network bandwidth requirements and costs are lower.

Consider an image classification problem. With cloud computing, the full image must be uploaded for online processing. With edge computing, that data does not need to be sent. Instead, we can send only the processed result, which is often many orders of magnitude smaller than the raw image. Multiplied across hundreds or more IoT devices on a network, the savings are significant.
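
A back-of-the-envelope calculation makes the difference concrete. The frame size, the result payload, the fleet size, and the per-device workload below are all hypothetical values chosen for illustration. Compressed images would be smaller than the raw frame used here, but still far larger than a classification result.

```python
def raw_image_bytes(width, height, channels=3, bytes_per_channel=1):
    """Size of an uncompressed image in bytes."""
    return width * height * channels * bytes_per_channel

# Assumed values for illustration: a 1920x1080 RGB frame versus a small
# JSON-style result such as {"label": "cat", "score": 0.97}.
image_size = raw_image_bytes(1920, 1080)
result_size = len(b'{"label": "cat", "score": 0.97}')
devices = 500            # hypothetical fleet size
frames_per_day = 1_000   # hypothetical per-device workload

saved_per_day = (image_size - result_size) * devices * frames_per_day
print(f"raw image: {image_size} B, result: {result_size} B")
print(f"reduction factor: {image_size / result_size:,.0f}x")
print(f"fleet-wide savings: {saved_per_day / 1e12:.2f} TB/day")
```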

Increased Data Security

Reducing data transmission to other sites also means fewer open connections that cyber-attacks can target. This lowers the risk of data being intercepted in transit or exposed in a breach. Furthermore, because the data is no longer stored in a centralized cloud, the impact of a single breach is considerably reduced.

Improved Reliability

The distributed nature of Edge AI and edge computing spreads operational risk across the whole network. Multiple edge devices can keep functioning even when the central cloud or cluster fails. This is particularly important for critical IoT applications such as those in healthcare.
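
One way to get this behavior is a local fallback: the device prefers a remote model when it is reachable and switches to an on-device model when it is not. The sketch below is only one possible design. Every name in it (`CloudUnavailable`, `classify_in_cloud`, `classify_on_device`) is hypothetical, and simple thresholds stand in for real models.

```python
class CloudUnavailable(Exception):
    """Raised by the (hypothetical) cloud client when the service is down."""

def classify_in_cloud(reading, cloud_online):
    """Stand-in for a remote inference call; fails when the cloud is offline."""
    if not cloud_online:
        raise CloudUnavailable("central cluster unreachable")
    return "alert" if reading > 0.8 else "normal"

def classify_on_device(reading):
    """Stand-in for a small on-device model (here, a simple threshold)."""
    return "alert" if reading > 0.75 else "normal"

def classify(reading, cloud_online):
    """Prefer the cloud model, but keep working locally if it is down."""
    try:
        return classify_in_cloud(reading, cloud_online), "cloud"
    except CloudUnavailable:
        return classify_on_device(reading), "edge"

print(classify(0.9, cloud_online=True))
print(classify(0.9, cloud_online=False))
```

A device that runs entirely on the edge would skip the cloud call altogether; the fallback variant is shown because it makes the failure case explicit.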

Automated decision-making

A self-driving car contains hundreds of sensors that continuously measure the vehicle's position and speed. Based on the data collected by these sensors, the driving computer can make appropriate decisions about steering, braking, and throttle use.

Real-time analytics

Edge computing makes near real-time analytics feasible. Analysis occurs in a fraction of a second, which is important in time-sensitive situations. Consider machinery on an industrial assembly line. If a robot on the line is triggered at the wrong moment or too late, the product may be damaged, or it may continue down the manufacturing line unprocessed. If the error remains undiscovered, the defective product may end up on the market or cause harm later in the manufacturing process.

Challenges

Edge AI has advantages over cloud-based AI, but it is not without problems. Keeping data locally means there are more locations to secure, and the greater physical access to devices opens the door to different kinds of cyber-attacks. Because computing capability is constrained at the edge, only a limited range of AI tasks can be executed there. Large, sophisticated models generally need to be simplified before they can be deployed on edge AI hardware, which in some cases lowers their accuracy.
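
The text does not name a specific simplification technique. One common option is weight quantization, which stores each weight as a small integer instead of a 32-bit float. The sketch below, using made-up weights and hypothetical function names, shows symmetric 8-bit quantization and the rounding error it introduces, which is the source of the possible accuracy loss.

```python
def quantize_int8(weights):
    """Symmetric linear quantization of floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs else 1.0
    quantized = [round(w / scale) for w in weights]
    return quantized, scale

def dequantize(quantized, scale):
    """Map the integers back to approximate float weights."""
    return [q * scale for q in quantized]

weights = [0.8213, -0.4127, 0.0031, 0.2954, -1.27]  # made-up example weights
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("int8 values:", q)
print(f"scale={scale:.4f}, max rounding error={max_error:.4f}")
```

Each weight now fits in one byte instead of four, at the cost of an error of at most half the scale per weight. Whether that error matters depends on the model and the task.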

Despite these challenges, several trends are pushing businesses toward the edge. According to a Gartner forecast from 2019, more than half of large organizations would have deployed at least one edge computing use case for IoT or immersive experiences by the end of 2021, up from less than 5% in 2019. Gartner also predicts that by the end of 2023, more than half of large organizations will have deployed at least six edge computing use cases. The number of edge computing use cases is likely to increase even further in the coming years.

For many companies, the benefits of Edge AI make adopting it a logical business decision. Insight forecasts that intelligent edge deployments will have an average ROI of 5.7 percent during the next three years. AI at the edge may reduce costs and improve scalability, reliability, and speed in sectors such as automotive, healthcare, manufacturing, and more.

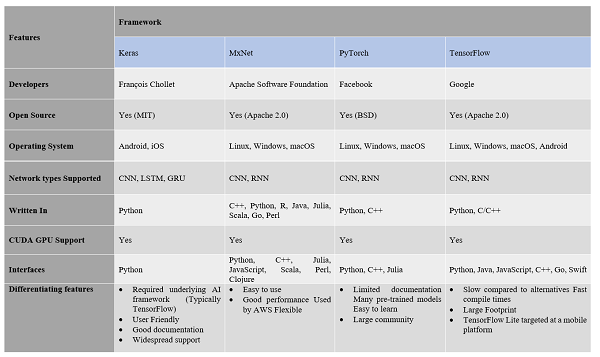

Choosing the right Edge AI framework

Below we’ve compared the most popular AI frameworks presently on the market.

Further reading: Understanding Edge AI Architecture